While working on storing a huge array in a database, I wondered how PHP's serialize() compares with JSON. A quick search suggested that serialization is fast, but I also read somewhere that unserialize() is slower than json_decode() on huge data.

So I wrote a program to measure how the time and space costs of both methods grow with the data size. Here are my results.

The program records the time taken by serialize()/unserialize() and json_encode()/json_decode(), along with the size of the serialized and JSON-encoded data.

Let's look at the graphs.

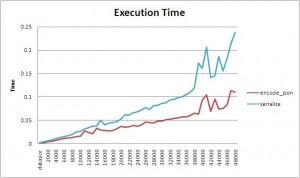

You can see that json_encode() is much faster than serialize(). But fast encoding is of little use if decoding is slow, so let's look at the decoding/unserialization graph.

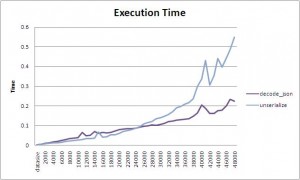

For small data sizes, unserialize() is faster. For data sizes above 25000 (the data size is the number of rows in the test array), json_decode() becomes faster than unserialize(). So if you are working with huge data, use json_decode().
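To read the crossover point from the collected stats instead of from the graph, something like the following could be used. It is a minimal sketch: `find_crossover()` is a hypothetical helper that is not part of the original program, and it assumes rows keyed the way `runtest()` builds them (`datasize`, `decode_json`, `unserialize`). Since each size is timed only once, the first row where JSON wins may be noise rather than a true crossover.

```php
<?php
/**
 * Returns the first 'datasize' at which JSON decoding took less time
 * than unserialize(), or null if that never happened in the given rows.
 * Hypothetical helper; rows are assumed to come from runtest().
 */
function find_crossover(array $rows)
{
    foreach ($rows as $row) {
        if ($row['decode_json'] < $row['unserialize']) {
            return $row['datasize'];
        }
    }
    return null;
}
```

It could be called as `find_crossover($stats)` once the test loop has finished.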

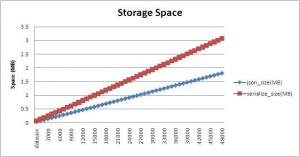

Oops... the serialized data takes up far more space than the JSON-encoded data.
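To see where the extra bytes come from, it may help to compare both encodings of a single row. This is only a sketch: the one-row array `$row_list` is made up for illustration and is not taken from the benchmark data.

```php
<?php
// One made-up row shaped like the benchmark data (id + text).
$row_list = array(array('id' => 0, 'text' => 'abc'));

$json = json_encode($row_list);
$serialized = serialize($row_list);

echo $json, "\n";       // [{"id":0,"text":"abc"}]
echo $serialized, "\n"; // a:1:{i:0;a:2:{s:2:"id";i:0;s:4:"text";s:3:"abc";}}

// serialize() writes a type tag for every key and value, plus a length
// for every string and array, so each row carries more overhead.
echo strlen($json), ' vs ', strlen($serialized), " bytes\n"; // 23 vs 50 bytes
```

The repeated keys `id` and `text` cost more per row in the serialized form, which is consistent with the gap growing with the number of rows.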

Conclusion:

If your data is small, you can prefer serialization. But for large data that is simple, such as a plain array, serialization is the worst choice.

I ran this experiment only on arrays. The results may be different for objects.

The program I wrote for this experiment:
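One reason objects may behave differently is that the two formats do not round-trip them the same way. The sketch below uses a hypothetical `Point` class, introduced only for illustration, to show what each method gives back.

```php
<?php
// Hypothetical class, used only for illustration.
class Point
{
    public $x = 1;
    public $y = 2;
}

$p = new Point();

// unserialize() restores an instance of the original class.
$from_serialize = unserialize(serialize($p));

// json_decode() knows nothing about Point: it returns a stdClass by
// default, or an associative array when the second argument is true.
$from_json_object = json_decode(json_encode($p));
$from_json_array = json_decode(json_encode($p), true);

var_dump($from_serialize instanceof Point);      // bool(true)
var_dump($from_json_object instanceof stdClass); // bool(true)
var_dump(is_array($from_json_array));            // bool(true)
```

So with objects, a timing comparison alone does not tell the whole story: JSON also loses the class information, which would have to be rebuilt by hand.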

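    <?php
    // Disable the execution time limit; the larger runs take a while.
    ini_set('max_execution_time', 0);

    // Records the start time in a global so end_timer() can read it.
    function start_timer()
    {
        global $time_start;
        return $time_start = microtime(true);
    }

    // Prints and returns the seconds elapsed since start_timer().
    function end_timer()
    {
        global $time_start;
        $totaltime = microtime(true) - $time_start;
        echo 'Process completed in ' . $totaltime * 1000 . ' ms<br>';
        return $totaltime;
    }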

    // Builds a string of $length characters picked at random from $valid_chars.
    function get_random_string($valid_chars, $length)
    {
        $random_string = "";
        $num_valid_chars = strlen($valid_chars);
        for ($i = 0; $i < $length; $i++) {
            $random_pick = mt_rand(0, $num_valid_chars - 1);
            $random_string .= $valid_chars[$random_pick];
        }
        return $random_string;
    }

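    // Writes the stats rows to a timestamped CSV file, with a header row.
    function save_csv($data)
    {
        // implode() takes the separator first; the reversed order was removed in PHP 8.
        $csvstring = implode(',', array_keys($data[0])) . "\n";
        foreach ($data as $v) {
            $csvstring .= implode(',', $v) . "\n";
        }
        file_put_contents('test_' . time() . '.csv', $csvstring);
    }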

    // Runs all four operations on a test array of $datasize rows and
    // returns the timings (in seconds) and output sizes (in MB).
    function runtest($datasize)
    {
        $stats_row = array();
        echo "<u>Making Test Data of size $datasize</u><br>";

        // Each row has an integer id and a random 16-character string.
        $array = array();
        for ($i = 0; $i < $datasize; $i++) {
            $array[] = array(
                'id' => $i,
                'text' => get_random_string('abcdefghi', 16)
            );
        }
        $stats_row['datasize'] = $datasize;

        // The timer starts after each echo so the output is not timed.
        echo '<u>Encoding in Json</u><br>';
        start_timer();
        $jsonencodeddata = json_encode($array);
        $stats_row['encode_json'] = end_timer();

        $f = 'tmp/' . $datasize . '_json.dat';
        file_put_contents($f, $jsonencodeddata);
        $stats_row['json_size(MB)'] = filesize($f) / 1048576;

        // Note: without a second argument, json_decode() returns the rows
        // as stdClass objects, while unserialize() returns arrays.
        echo '<u>Decoding from Json</u><br>';
        start_timer();
        $jsondecodeddata = json_decode($jsonencodeddata);
        $stats_row['decode_json'] = end_timer();

        echo '<u>Serialization of data</u><br>';
        start_timer();
        $serializeddata = serialize($array);
        $stats_row['serialize'] = end_timer();

        $f = 'tmp/' . $datasize . '_serialize.dat';
        file_put_contents($f, $serializeddata);
        $stats_row['serialize_size(MB)'] = filesize($f) / 1048576;

        echo '<u>Unserialization of data</u><br>';
        start_timer();
        $unserializeddata = unserialize($serializeddata);
        $stats_row['unserialize'] = end_timer();

        return $stats_row;
    }

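    $stats = array();

    // Make sure the output directory exists, then clear files from earlier runs.
    if (!is_dir('tmp')) {
        mkdir('tmp');
    }
    $files = glob('tmp/*'); // get all file names
    foreach ($files as $file) { // iterate files
        if (is_file($file)) {
            unlink($file); // delete file
        }
    }

    // Test data sizes from 1000 to 49000 rows in steps of 1000.
    for ($i = 1000; $i < 50000; $i += 1000) {
        $stats[] = runtest($i);
        echo '<hr>';
    }

    save_csv($stats);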
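The program times each operation once per data size, so a single slow run can distort a point on the graph. One possible refinement, shown here as a sketch and not as part of the original experiment, is to repeat each operation and keep the median. `median_time()` is a hypothetical helper name.

```php
<?php
/**
 * Runs $fn $runs times and returns the median wall-clock time in seconds.
 * For an even number of runs, the lower of the two middle values is used.
 * Hypothetical helper, not part of the original program.
 */
function median_time(callable $fn, $runs = 5)
{
    $times = array();
    for ($i = 0; $i < $runs; $i++) {
        $start = microtime(true);
        $fn();
        $times[] = microtime(true) - $start;
    }
    sort($times);
    return $times[(int) floor(($runs - 1) / 2)];
}

// Usage: time json_encode() on a small made-up array.
$sample = array(array('id' => 0, 'text' => 'abc'));
$seconds = median_time(function () use ($sample) {
    json_encode($sample);
});
echo 'Median: ' . $seconds * 1000 . " ms\n";
```

The median is used instead of the mean so that one unusually slow run does not pull the result up.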

If I got anything wrong, please let me know in a comment.

Thanks.

Thanks for sharing this. Good research.

Thanks for sharing your experiment. It was really helpful.

Thanks for sharing. It really saved me time.

Thanks for sharing this. It inspired me to check against PHP 7, so I modified your script a bit, mostly replacing the HTML with CLI coloring and adding one more 150k suite. You can find it at https://github.com/jakubwrona/tests, which also contains the results for dockerized PHP 5.6 and installed PHP 7. Quick summary: apart from PHP 7 being a lot faster, which is no surprise, it looks like serialize/unserialize has been improved a lot in PHP 7 and seems faster than the JSON encode/decode combo.

Thanks Jakub. It's really nice to see that you have tested it with PHP 7 as well. I will also do a run on PHP 7 and update the post with the charts soon.